Google’s Mangle, Nano Banana, and the New Wave of AI Agents: What You Need to Know

Swamped with security warnings, dependency chains, audit logs, and half-baked file formats? Welcome to the club. Your software stack is barking at you from 17 directions—and somehow you’re supposed to draw meaningful conclusions from it all.

Here’s the truth: LLMs can’t help you unless your data’s buttoned up.

That’s where Google’s new logic-layer tech (plus a few sneaky updates) comes in—to help make your stack not just smarter, but actually intelligible.

In this post, you’ll get:

- A crash course on Mangle, Google’s open-source, Datalog-based language for reasoning over scattered, messy data.

- The scoop on a mystery AI model turning heads in image generation.

- A breakdown of 5 new Gemini-powered agents that can turbocharge your cloud workflows.

Let’s dig in.

Turn Your Data Mess Into Answers with Google Mangle

You know the drill: log files here, SBOM reports there, CVE notifications piling up in a corner… No wonder security and compliance feel more like whack-a-mole than strategy.

Enter Mangle, Google’s shiny new logic layer for structured chaos.

It extends Datalog, a declarative logic language where you state facts and rules and the engine derives everything that follows, and it lets you:

- Pull data from files, APIs, and databases—all into one unified graph.

- Write declarative queries to explain what’s going on, instead of spaghetti-scripting your way out.

- Embed it as a Go library with zero special setup. Lightweight, portable, practical.

What makes it different?

- Recursive rules follow relationships as deep as they go. Want to trace a vulnerability three hops upstream? Easy.

- Mix-and-match aggregations (like counts or sums) and external function calls—real-world logic meets symbolic reasoning.

- Designed to feed structured facts to AI agents, boosting reliability and transparency.
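To make the recursion and aggregation points concrete, here is a minimal sketch in plain Python. It is not Mangle syntax and does not use the Mangle API; it only mimics how a Datalog-style engine keeps applying rules until no new facts appear. The package names and the helper functions (`transitive_closure`, `dependents_of`, `count_reachable`) are made up for illustration.

```python
# Illustrative only: plain Python, not Mangle syntax or the Mangle API.
# Facts are (package, direct_dependency) pairs. All names are hypothetical.
DEPENDS_ON = {
    ("web-app", "auth-lib"),
    ("auth-lib", "crypto-lib"),
    ("crypto-lib", "lib-c"),
    ("batch-job", "csv-lib"),
}


def transitive_closure(edges):
    """Apply two Datalog-style rules until nothing new is derived.

    reaches(X, Y) :- depends_on(X, Y).
    reaches(X, Z) :- reaches(X, Y), depends_on(Y, Z).
    """
    reaches = set(edges)
    while True:
        derived = {
            (x, z)
            for (x, y) in reaches
            for (y2, z) in edges
            if y == y2
        }
        new_facts = derived - reaches
        if not new_facts:
            return reaches  # fixpoint: no rule produces anything new
        reaches |= new_facts


def dependents_of(package, edges):
    """Every package that reaches `package` through any number of hops."""
    return sorted(x for (x, y) in transitive_closure(edges) if y == package)


def count_reachable(edges):
    """Aggregation: how many distinct packages each package reaches."""
    counts = {}
    for x, _ in transitive_closure(edges):
        counts[x] = counts.get(x, 0) + 1
    return counts


if __name__ == "__main__":
    print(dependents_of("lib-c", DEPENDS_ON))
    print(count_reachable(DEPENDS_ON))
```

The loop stops once a pass derives no new pairs, so it terminates even if the dependency data contains cycles. A real engine evaluates this far more efficiently, but the idea is the same: you state the rules and let the fixpoint do the walking.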

Where this thing really shines:

- Security: Prove (not guess) whether a CVE in Library C actually touches your production stack.

- Compliance: Scan thousands of software bills of materials (SBOMs) and flag unapproved packages—automatically.

- Enterprise graphs: Surface the hidden links between people, projects and assets in seconds.
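The compliance case boils down to a set difference plus a filter. Here is a rough sketch in plain Python (again, not Mangle code). The SBOM shape, the allowlist, the service names, and the `unapproved_packages` helper are all assumptions for illustration; real SBOM formats such as SPDX or CycloneDX carry far more detail.

```python
# Illustrative only: a hypothetical, heavily simplified SBOM shape.
APPROVED = {"auth-lib", "crypto-lib", "csv-lib"}

# service name -> components listed in that service's SBOM
SBOMS = {
    "web-app": ["auth-lib", "crypto-lib", "left-pad-ng"],
    "batch-job": ["csv-lib"],
    "report-svc": ["csv-lib", "pdf-kit"],
}

# services currently deployed to production (hypothetical)
PRODUCTION = {"web-app", "batch-job"}


def unapproved_packages(sboms, approved, only_services=None):
    """Return {service: [unapproved components]} for services with violations.

    If `only_services` is given, restrict the scan to those services.
    """
    violations = {}
    for service, components in sboms.items():
        if only_services is not None and service not in only_services:
            continue
        bad = sorted(set(components) - approved)
        if bad:
            violations[service] = bad
    return violations


if __name__ == "__main__":
    print(unapproved_packages(SBOMS, APPROVED))
    print(unapproved_packages(SBOMS, APPROVED, only_services=PRODUCTION))
```

In a logic layer you would state this as a rule (a component is a violation if it appears in an SBOM and is not on the approved list) and let the engine apply it across every SBOM at once, instead of writing the loop by hand.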

Mangle doesn’t just clean up your data. It empowers your AI tools to reason with it.

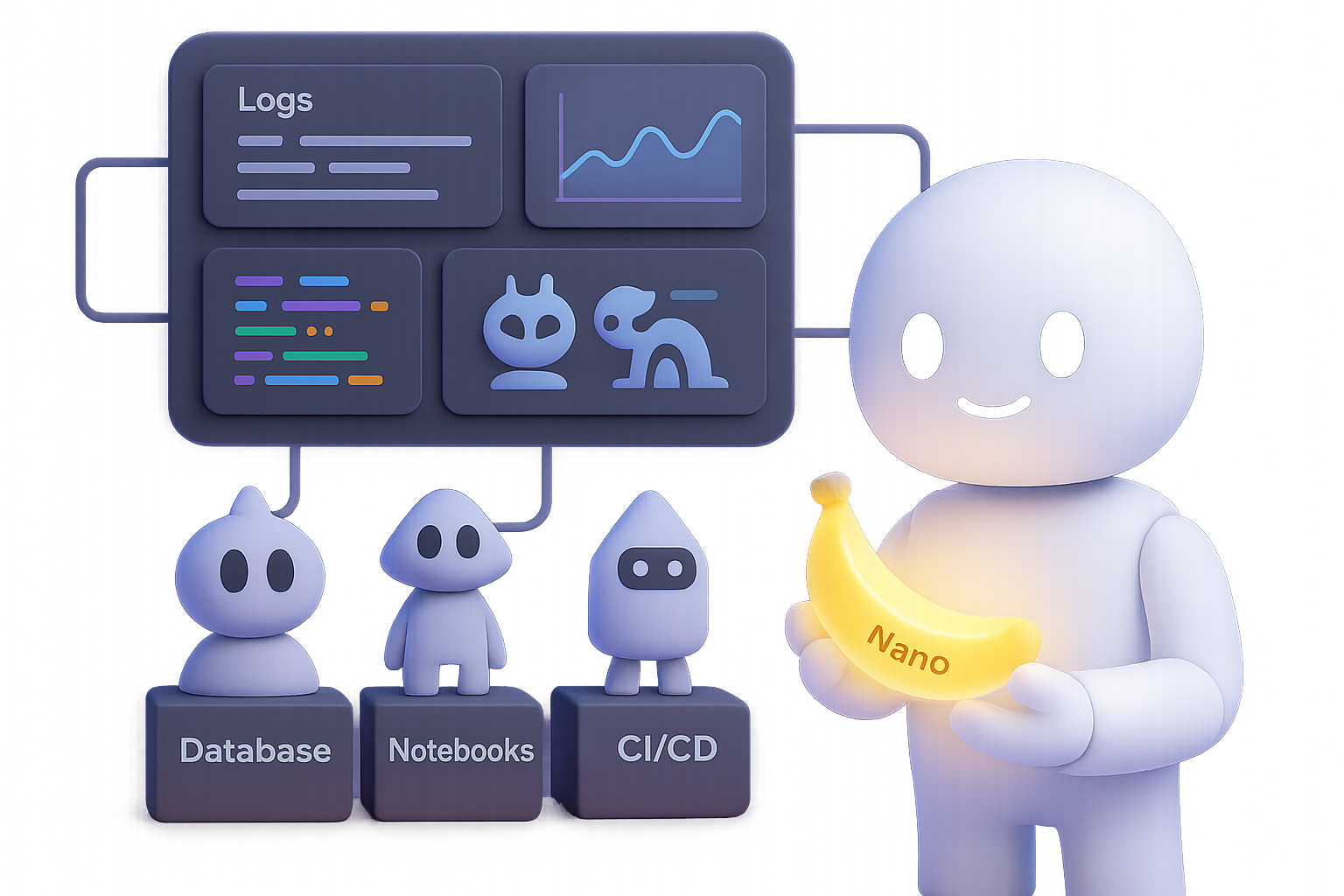

Meet “Nano Banana”: Mysterious Model, Serious Skills

While Mangle slipped quietly onto GitHub, something way weirder caught fire on LMArena (formerly the LMSYS Chatbot Arena): a high-quality, uncredited image model called Nano Banana.

Yeah, that’s the name.

Here’s what testers noticed:

- Image outputs were sharp and creative, and in many cases testers preferred them to output from flagship models.

- It responded well to edit instructions like “replace the sky with sunset tones.”

- It still tripped on spelling inside its images (a common AI hiccup), but overall it felt premium.

So who’s behind it?

Nothing official yet, but all signs scream Google:

- “Nano” matches Gemini Nano, the name Google uses for its on-device models.

- Several Googlers have dropped banana emojis on X like digital breadcrumbs.

- It could be a teaser for a local-friendly AI generator launching alongside new hardware.

If Nano Banana is the real deal, we could be talking on-device, high-fidelity image generation, perfect for workflows where privacy, speed, or offline access matters.

5 Gemini Agents That Actually Do the Work

Forget autocomplete. Google Cloud just dropped five purpose-built agents powered by Gemini that chop hours off workflows.

1. BigQuery Data Agent

- Describe your pipeline in plain English—it builds and monitors jobs for you.

- Schema change? No panic. The agent adjusts and keeps things running.

2. NotebookLM Enterprise Agent

- Lives inside NotebookLM. Runs analysis, builds baseline models, and writes team docs.

- Yes, it can engineer features for you. You’re not dreaming.

3. Looker Code Assistant

- Turn plain questions into SQL, charts, or Python snippets.

- Understands your data definitions because it sits on Looker’s semantic layer.

4. Database Migration Agent

- Reads legacy schemas, functions and stored procedures—then auto-converts to AlloyDB or Spanner.

- Live replication means your cut-over stays online and stress-free.

5. Gemini CLI for GitHub Actions

- Auto-labels PRs, suggests tests, and triages issues from inside your GitHub Actions workflows.

- Fully scriptable, so you control the rules of the road.

These aren’t just helpers. They’re capable of owning entire project phases—from ingestion to delivery.

Why This Matters

Google’s making big moves in AI, but this wave is less about flash and more about functionality.

Here’s the shift:

- Clean logic-layer reasoning (via Mangle) + scalable intelligence (via Gemini) = better decisions, fewer patch scripts.

- Fast transitions from data to output—whether that’s a compliance report or a migrated database.

- A plausible AI-on-the-edge playbook: if Nano Banana turns out to be an official on-device model, high-end visuals could be generated offline.

Let’s be real: LLMs are already impressive. But without structured, credible information to build on, they hallucinate. What Google is offering here are tools to anchor AI in reality—and get your time back in the process.

Cleaner Data, Smarter AI, Less Toil

Google’s Mangle brings logic to your mess. Nano Banana teases flagship image AI on local devices. Gemini agents handle the dull stuff, so you can actually move fast without breaking things.

Now it’s your turn: want to build smarter, faster AI workflows?

👉 Get started with AI foundations at Tixu – the beginner-friendly platform that helps you actually learn and ship with AI.
